Fairness in AI Training Models: A Crucial Step Towards Building Trustworthy and Equitable Systems

As artificial intelligence (AI) transforms industries and changes how we live and work, concerns about fairness and bias in AI training models have become pressing. Fairness is central to building trustworthy and equitable systems that do not perpetuate or amplify existing social and economic inequalities.

The Need for Fairness in AI Training Models

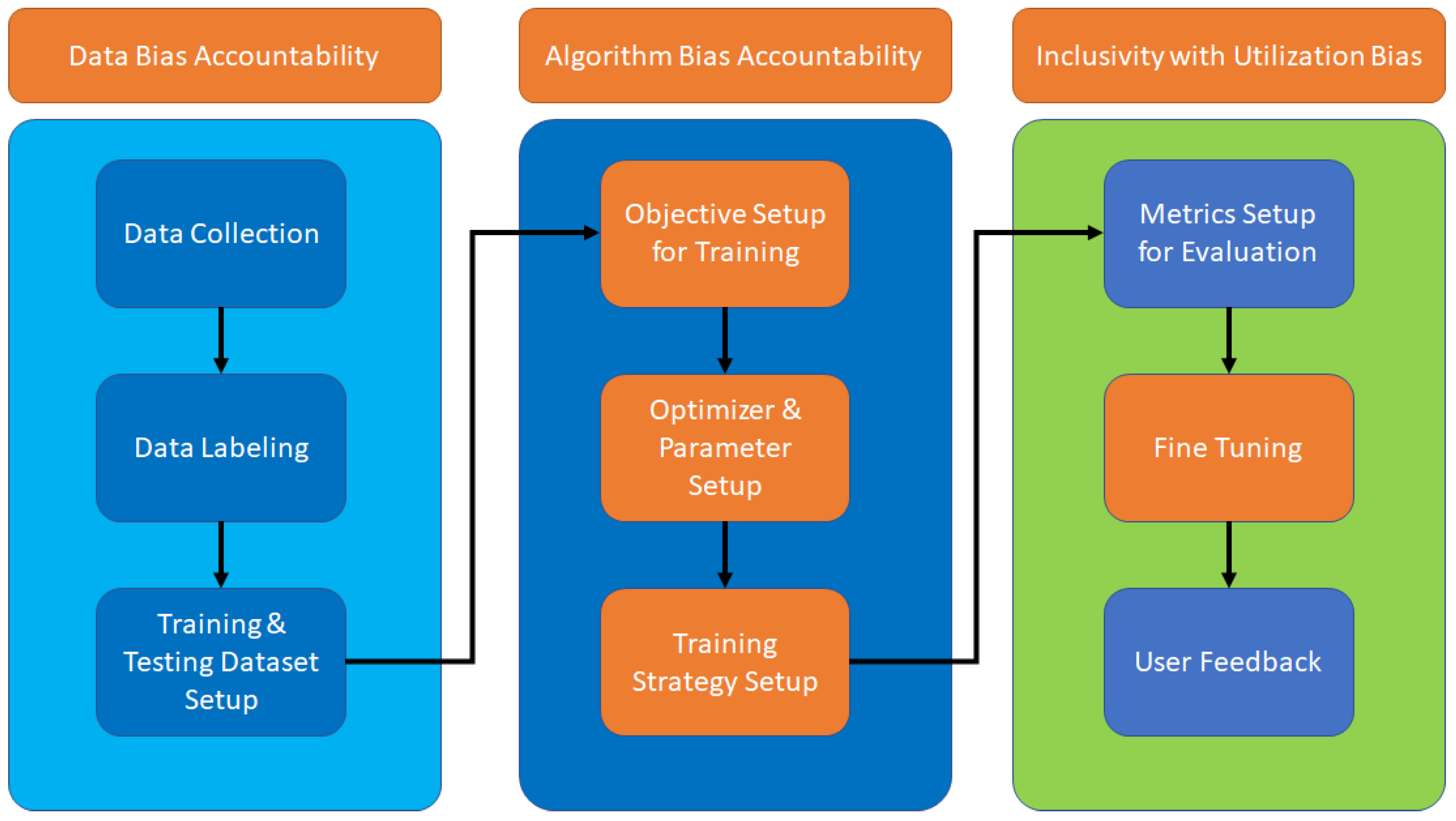

AI systems are only as good as the data they are trained on: if that data encodes bias, the models tend to reproduce it. Fairness in AI training models aims to keep these systems from discriminating against groups or individuals based on characteristics such as age, race, gender, or socioeconomic status. Fairness is not only about avoiding harm, but also about promoting just and equitable outcomes.

Understanding Fairness in AI Training Models

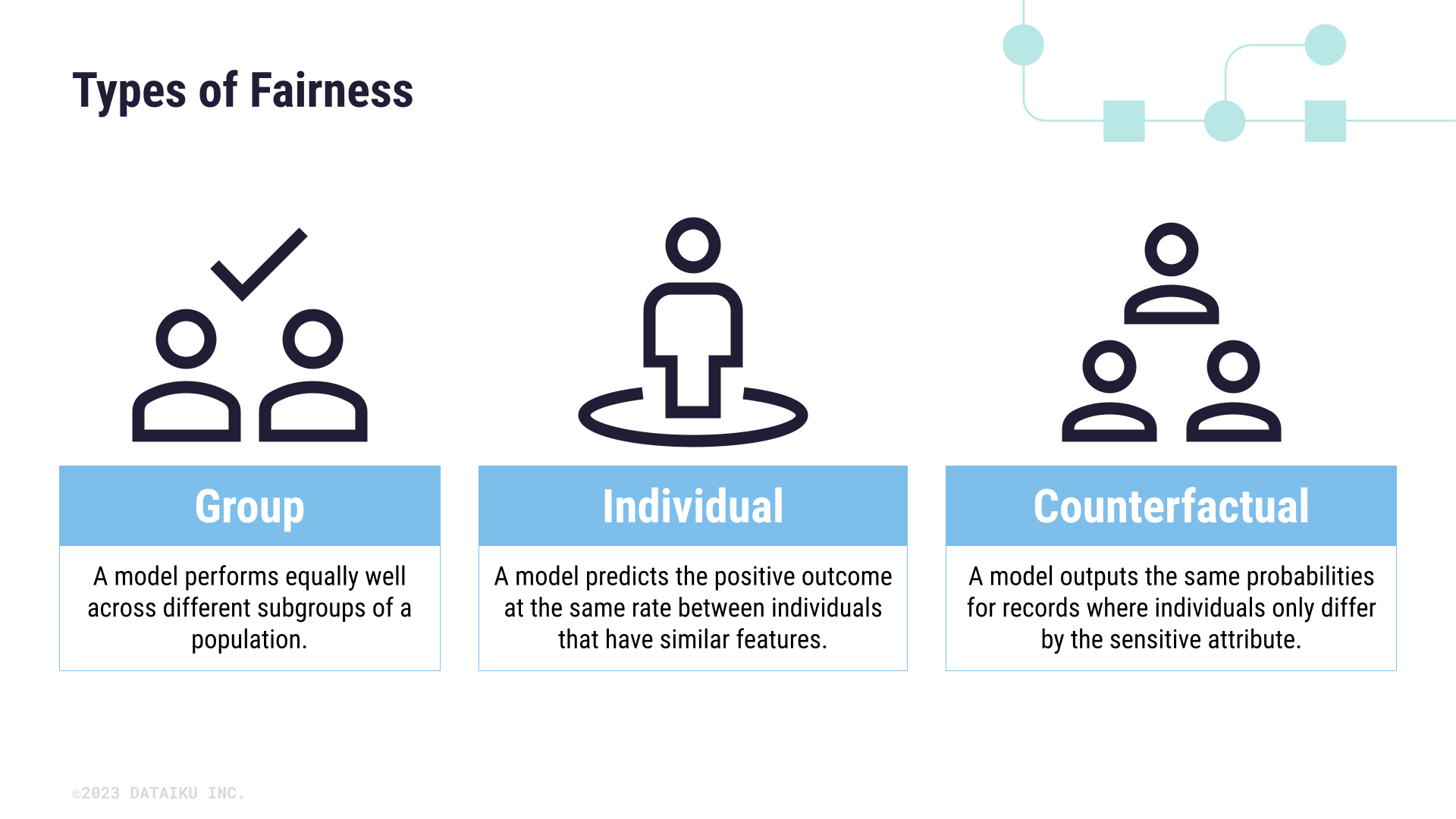

Fairness in AI training models involves several key concepts, including individual fairness, group fairness, and counterfactual fairness. Individual fairness requires that similar individuals be treated similarly. Group fairness requires that outcomes, such as selection rates or error rates, be comparable across groups defined by a protected characteristic. Counterfactual fairness asks whether the decision about an individual would have been the same if that individual's protected characteristic had been different and everything else that does not depend on it had stayed the same.
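
To make two of these ideas concrete, here is a minimal Python sketch using only the standard library. It is an illustration under stated assumptions, not a definitive implementation. The first part measures one common group fairness quantity, the gap in positive-prediction rates between groups (often called the demographic parity difference). The second part is a simplified probe in the spirit of counterfactual fairness: it changes only the protected attribute of a record and checks whether the decision changes. A full counterfactual analysis would also need a causal model of how the protected attribute influences other features, which this sketch does not attempt. The function names, the toy data, and `toy_model` are all hypothetical.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the fraction of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 means equal rates)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def attribute_flip_changes_decision(model, record, attribute, alternative):
    """Return True if changing only `attribute` changes the model's decision.

    This is a simplification: it ignores features that causally depend
    on the protected attribute.
    """
    flipped = dict(record, **{attribute: alternative})
    return model(record) != model(flipped)


def toy_model(record):
    """Hypothetical, deliberately biased rule: group 'b' faces a higher threshold."""
    threshold = 50 if record["group"] == "a" else 60
    return int(record["score"] >= threshold)


# Hypothetical toy data: binary predictions and the group of each individual.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)

# Probe one hypothetical applicant by flipping only the group attribute.
applicant = {"score": 55, "group": "b"}
changed = attribute_flip_changes_decision(toy_model, applicant, "group", "a")

print("selection rates:", rates)
print("demographic parity difference:", gap)
print("decision changes when group is flipped:", changed)
```

In this toy data, group "a" receives positive predictions far more often than group "b", and the biased rule gives the same applicant a different decision once the group label is flipped. In practice, which gap counts as acceptable and which attributes to probe depend on the application and should be decided with domain and legal input.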