Biased AI System Debugging: Unraveling the Mysteries of Cognitive Biases in AI

Artificial intelligence (AI) has changed many industries, from healthcare to customer service. However, AI systems can inherit and amplify bias, including the cognitive biases of the people who build and use them, which can lead to poor decisions and unfair outcomes. Debugging a biased AI system requires understanding how data, algorithms, and human judgment interact. In this article, we'll look at how bias arises in AI systems and explore strategies for identifying and mitigating its impact.

What are Biases in AI Systems?

Bias in AI systems refers to systematic differences in the performance or output of an AI algorithm, for example across groups of people or contexts of use. It can arise from several sources, including data, algorithms, and human judgment. The term "bias" can be misleading because it suggests a deliberate intent to discriminate. In practice, most biases in AI systems are unintentional and stem from limitations and flaws in the data, the algorithms, and human decision-making processes.
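One way to make "systematic differences in performance" concrete is to compare a metric across groups. The sketch below is a minimal illustration, not a standard API: the function names `accuracy_by_group` and `accuracy_gap`, the group labels, and the toy data are all assumptions made up for this example.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for parallel lists of labels, predictions, and group tags."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best and worst per-group accuracy."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical toy data: two groups, "a" and "b", four examples each.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

per_group = accuracy_by_group(y_true, y_pred, groups)
print(per_group)                # {'a': 1.0, 'b': 0.5}
print(accuracy_gap(per_group))  # 0.5
```

A large gap does not by itself prove that a system is unfair, but it is a useful signal of where to start looking. Accuracy is only one possible metric; the same grouping approach can be applied to error rates or other measures.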

Types of Biases in AI Systems

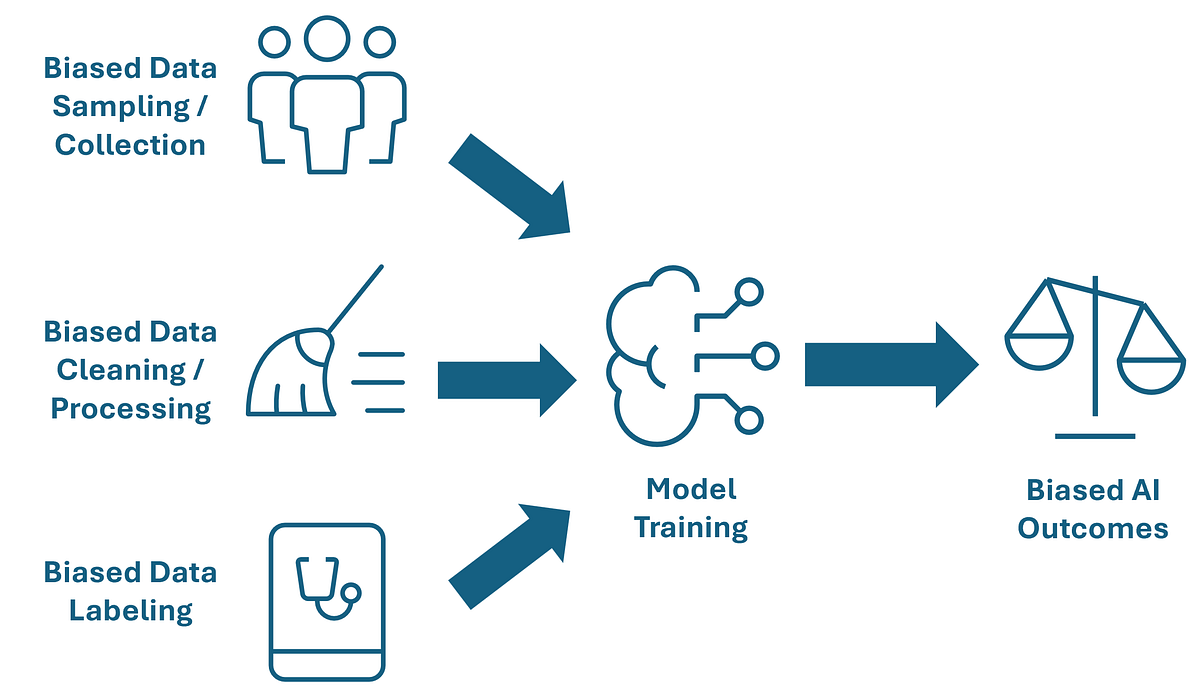

Biases in AI systems can be categorized into three main types:

- Input Bias: This type of bias arises from the quality and representativeness of the training data. If the data is incomplete, inaccurate, or lacks diversity, the AI algorithm may learn to perpetuate these flaws and produce biased outputs.

- System Bias: This type of bias comes from the architecture and design of the AI system itself. The behavior of a machine learning model, for instance, depends on design choices such as activation functions, regularization techniques, and hyperparameters, and these choices can skew its outputs.

- Application Bias: This type of bias arises from the deployment and application of AI systems in real-world scenarios. If the AI system is not adapted to the specific context or requirements of the application, it may produce suboptimal or biased results.
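Of the three types, input bias is often the easiest to check directly, because the training data can be inspected before any model is trained. The sketch below compares each group's share of a dataset with a reference share. It assumes the reference shares are known, and the function names, the `0.8` threshold, and the toy data are illustrative assumptions rather than an established standard.

```python
from collections import Counter


def representation_report(groups, reference_shares):
    """Compare each group's observed share of the data with an expected share.

    groups: one group tag per training example.
    reference_shares: {group: expected fraction}, assumed to be known.
    """
    if not groups:
        raise ValueError("groups must not be empty")
    counts = Counter(groups)
    n = len(groups)
    report = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / n
        report[group] = {
            "observed": observed,
            "expected": expected,
            "ratio": observed / expected,
        }
    return report


def underrepresented(report, threshold=0.8):
    """Groups whose observed share falls below threshold * expected share.

    The default threshold is an arbitrary choice for illustration.
    """
    return sorted(g for g, row in report.items() if row["ratio"] < threshold)


# Hypothetical toy data: group "b" makes up 20% of the examples
# but is assumed to make up 50% of the target population.
training_groups = ["a"] * 8 + ["b"] * 2
reference = {"a": 0.5, "b": 0.5}

report = representation_report(training_groups, reference)
flagged = underrepresented(report)
print(flagged)  # ['b']
```

A check like this only covers representativeness. It says nothing about label quality or measurement errors, which are also sources of input bias, and it does not detect system or application bias at all.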